Electronic Journal of Academic and Special Librarianship

v.7 no.3 (Winter 2006)


Jenna Ryan, Reference Librarian and Virtual Reference Coordinator

Louisiana State University, USA

jryan1@lsu.edu

Alice L. Daugherty, Reference and Instruction Librarian

Louisiana State University, USA

adaugher@lsu.edu

Emily C. Mauldin, Graduate Assistant, School of Library and Information Science

Louisiana State University, USA

emauldi@lsu.edu

Virtual reference is an important service provided by the Louisiana State University Libraries. A subcommittee within the Reference Department of Middleton Library decided to quantitatively and qualitatively review virtual reference transcripts for the 2005-2006 school year in order to assess and evaluate strengths and weaknesses of the services provided. The transcript analysis provides information reflecting how our patrons are using virtual reference and how our librarians are performing in the virtual environment.

Virtual reference has redefined reference services by extending the scope of the traditional reference desk and allowing distant patrons the access and ability to create dialogue with a librarian when in need of assistance. Virtual reference is important for patrons who need quick instruction on how to find a piece of information or a quick refresher on how to use a database. Several articles reflect upon implementing and developing a virtual reference or chat reference service, and it is clear that virtual reference is no longer considered a novelty service. There is a need to evaluate the service quality from the viewpoint of the service providers. The primary focus of this research is to assess (even anecdotally) the quality of the service provided to patrons, focusing specifically on the question types received and the quality of service transactions during the academic year 2005-2006.

Patrons are becoming more accustomed to communicating with online services. This is evidenced by the rapid growth in the use of virtual social networking sites such as MySpace or Facebook. Patrons are "net-savvy" and have familiarity with connecting to information through wikis, blogs, RSS feeds, and other technologically enhanced formats. The virtual reference set-up lends itself to the university mindset, in that the patrons can manage multiple online tasks and still carry on an effective synchronous chat with a librarian. Virtual reference is an important mainstay within library services. We must make it as efficient and user-friendly as possible. According to Janes' summation of virtual reference, "our services could be vibrant, making the best use of new technologies and the collective wisdom and expertise of skilled librarians around the world, providing high-quality services, educating people about information and its evaluation and use and how to help themselves more effectively" (2003: 199).

Virtual reference at Louisiana State University was implemented in the fall semester of 2002. The LSU Libraries' Virtual Reference committee established for the project chose LiveAssistance, a web-based software package, to provide the service. A training session, including training on how to conduct a chat-based reference interview, was provided for the staff, and multiple advertisements were run in the campus newspaper and on the campus radio station. New mousepads displaying the web address for the virtual reference system were ordered for the reference computers. Once the service became established, however, much of this effort died down. Training sessions were provided for new staff only, and marketing was limited to the library's homepage and the newsletter provided annually to faculty. Apart from the statistics and user surveys generated by the LiveAssistance software, very little evaluation of the service was being done. The authors chose to do this study in part to obtain a detailed and accurate report of the current state of virtual reference at LSU, in order to inform future decisions on how to improve the service and make it more visible to our patrons.

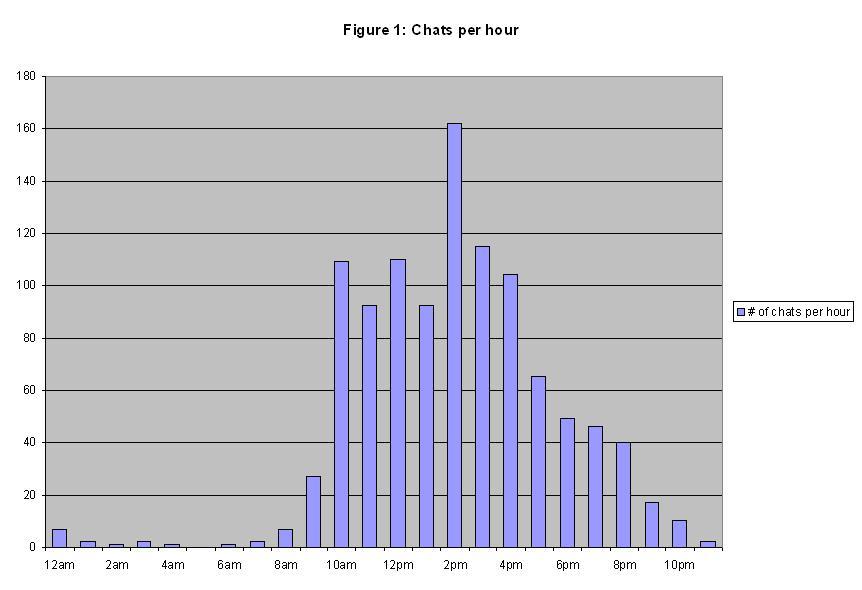

The virtual reference service is staffed 41 hours a week by graduate assistants, library assistants, librarians, and the head of the Reference Department. Librarians and staff log on for one-hour shifts from their office computers. Service is not offered during the weekend, and the hours may vary slightly during the holidays and intersession periods. The breakdown of chat session traffic indicates that most patrons use the service between 2:00 p.m. and 4:00 p.m., with an average of 162 sessions occurring between 2:00 p.m. and 3:00 p.m. and 115 sessions between 3:00 p.m. and 4:00 p.m. (See Figure 1: Chats per hour.)

Patrons are also attempting to log on between 8:00 p.m. and 9:00 p.m., when the service is not available. The largest number of chat sessions occurred on Mondays and Wednesdays (See Figure 2: Chats per day). Of the days on which the service is offered, Fridays received the fewest chat sessions. The Friday data is nonetheless interesting: although the service is available for only three hours on Fridays, more chat sessions occurred per hour on Fridays than on any other day of the week.

One area in which we feel we can make some improvement is the marketing of our VR service. When the service was first implemented, numerous advertisements were run in both the campus newspaper and on the campus radio station. Since then, however, we have relied primarily on the library's webpage to get the word out. An analysis of the optional exit surveys completed by patrons during the 2005-2006 school year shows that the overwhelming majority (134 out of 164 respondents, or 82%) reported that they discovered our service through the webpage. The next largest source of information was a teacher in class (11 out of 164, or 7%). Other sources that were represented included word of mouth and presentations done by the library at Freshman Orientation or other such events, but the numbers were small. A more comprehensive marketing plan could go a long way toward improving utilization of the service.
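The survey percentages above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the counts reported in the text; the "all other sources" figure is not reported separately in the article and is derived here as a remainder, and the function name `share` is our own.

```python
# Exit-survey counts reported in the text (2005-2006 school year).
respondents = 164
reported = {"library webpage": 134, "teacher in class": 11}

# The remaining sources (word of mouth, orientation presentations, etc.) are
# not broken out in the text, so they are derived here as a single remainder.
reported["all other sources (derived)"] = respondents - sum(reported.values())

def share(count, total):
    """Return count as a whole-number percentage of total."""
    return round(100 * count / total)

shares = {source: share(n, respondents) for source, n in reported.items()}
for source, pct in shares.items():
    print(f"{source}: {reported[source]} of {respondents} ({pct}%)")
```

Because each share is rounded independently, the rounded percentages need not sum to exactly 100.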

In their study of virtual reference service in their university library, De Groote, Dorsch, Collard, and Scherrer stress "the importance of assessment and evaluation in the planning, implementation, and provision of digital services" (2005: 436). Different libraries that have studied the questions they have received via digital reference have used different categories to code their results. The De Groote et al. study, for example, divided the questions into the categories of directional, ready reference, in-depth/mediated, instructional, technical, accounts status, and other. They also found that, as they had suspected, the frequency of use of the virtual reference service was tied to the academic year, with more frequent use when the semesters began (De Groote et al., 2005).

David Ward raises the question, "Should chat reference answer everything that in-person reference does?" (2004: 46). Ward also observes that "Every library has its own work environment and matching service goals" and states that "individual libraries must look at their own services" (2004: 47). He suggests studying the impact and the success of virtual reference services in a particular library by examining the accuracy of the questions, how satisfied the users are with the service, and the specific parts of the service (2004).

Julie Arnold and Neal Kaske suggest that "Chat service transcripts provide an excellent way to examine the quality of reference transactions for any library or group of libraries. Analysis of the transactions in this way makes it possible to gain an understanding of how questions are posed and how they are answered in a way that has not been possible in the past" (2005: 177). They give a definition of virtual reference as "synchronous, online, interactive (chat) reference service and excludes asynchronous modes of digital reference, such as e-mail or Web forms" (2005: 178). Arnold and Kaske also point out that most studies thus far have focused on aspects of virtual reference such as when it is used the most, who uses it, response times, what kinds of questions were asked, and how accurate the responses to those questions were. The categories for questions used in Arnold and Kaske's study were directional, ready reference, specific search, research, policy and procedural, and holdings/do you own (179-10). On the convenience of the service, they note that "Off-campus users would first have to travel to campus, find parking, find a library, and then pose their question. The Web is clearly a logical and more convenient decision on their part" (181). The study also found that "most of the questions were policy and procedural, and the smallest number of questions was in the category of research questions" (181). The researchers found that the users who asked questions via virtual reference did not all expect the same things from the service, though finding out exactly what was expected was not one of their research questions (Arnold and Kaske, 2005).

According to Ronan, Reakes, and Cornwell, "chat reference services have reached the second wave of development and investigation" (2002/2003: 226-227). Rather than focusing on the technical aspects of the software itself, we can now focus on the quality of the reference service that we are providing to our patrons via chat, on ways to evaluate the level of service given, and on making certain that we are providing the same level of reference service via chat that we provide face-to-face (2002/2003).

The virtual reference transcripts from the 2005-2006 school year were collected and analyzed. Our chat software allows us to filter for successful chats only (defined as chats with a successful initial connection). This allowed us to filter out attempts to connect outside of operating hours and other unsuccessful calls. The resulting 349 transcripts were analyzed and coded independently by two librarians and a graduate assistant from the LSU School of Library and Information Science.

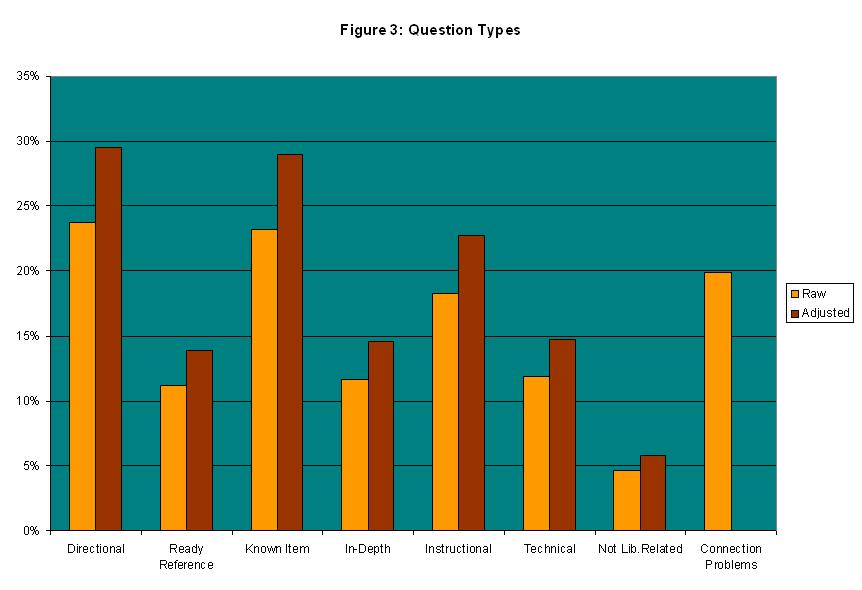

In their book, McClure et al. (2002) present a number of areas in which virtual reference transcripts may be analyzed. When deciding what to code for, we selected several of these areas and adapted them to our purpose. The transcripts were coded in two different areas: the type of question, and the nature of the answer, which we called Customer Service. Under Types of Questions (See Figure 3: Question Types) we placed transcripts in the following categories:

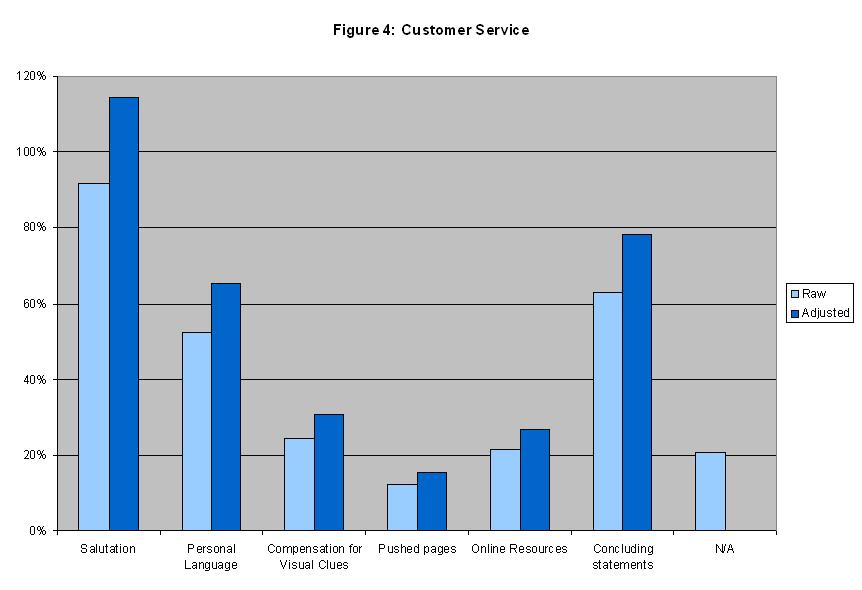

Under the area which we, for lack of a better title, called "Customer Service," transcripts were placed in categories based both on the performance of the librarian and on what chat features and resources were used. (See Figure 4: Customer Service.) The following categories were used:

In both coding areas, a transcript could be placed in more than one category if more than one applied within the same chat session. Once the coding was compiled for all three coders, an average was taken for each category in order to reduce the influence of any one coder's personal bias.
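The averaging step described above can be sketched as follows. This is only an illustration under stated assumptions: the per-coder tallies are hypothetical placeholders (the article reports averaged results only), the category labels are abbreviated, and the function name `average_by_category` is our own.

```python
from statistics import mean

# HYPOTHETICAL per-coder tallies: the article reports only averaged results,
# so these numbers are placeholders and not the study's data.
coder_tallies = {
    "coder_1": {"directional": 80, "known item": 85, "instruction": 60},
    "coder_2": {"directional": 90, "known item": 78, "instruction": 66},
    "coder_3": {"directional": 82, "known item": 80, "instruction": 69},
}

def average_by_category(tallies):
    """Average each category's count across all coders.

    A category that a coder never used counts as zero for that coder.
    """
    categories = sorted({c for counts in tallies.values() for c in counts})
    return {
        c: mean(counts.get(c, 0) for counts in tallies.values())
        for c in categories
    }

averaged = average_by_category(coder_tallies)
for category, value in averaged.items():
    print(f"{category}: {value}")
```

Averaging category totals smooths out differences between coders but does not measure how often they agreed on individual transcripts; that would require a separate agreement statistic.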

In order to get the most accurate measurement possible of subjective decisions like question categories and service quality judgments, all three authors independently coded the 349 transcripts used in this study. The totals for each category were averaged to get an overall picture of the transcripts. Several notable trends were discovered.

Due to the problems we'd been having with the server, a full 20% of the collected transcripts presented some sort of connection issue and, as such, were not coded. Of the remaining 280 transcripts, the largest category was directional or general information inquiries, with 30%. Following close behind were known items, with 29%. Twenty-three percent of the questions involved instruction on how to use the library resources, and ready reference, technical issues, and in-depth research accounted for 14%, 15%, and 15% respectively. Only 6% of the questions were not library related and were thus referred to some other resource.
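The question-type percentages above add up to more than 100% because a single transcript could be coded under more than one category. A minimal sketch of that check, using only the figures reported in the text, follows; the approximate counts are derived from rounded percentages and are therefore estimates rather than the study's actual tallies, and the category labels are abbreviated.

```python
# Figures reported in the text.
collected_transcripts = 349
coded_transcripts = 280
question_type_pct = {
    "directional / general information": 30,
    "known item": 29,
    "instruction": 23,
    "ready reference": 14,
    "technical issues": 15,
    "in-depth research": 15,
    "not library related": 6,
}

# Share of collected transcripts that were left uncoded (connection issues).
uncoded_pct = round(
    100 * (collected_transcripts - coded_transcripts) / collected_transcripts
)

# The total exceeds 100 because transcripts could fall into several categories.
total_pct = sum(question_type_pct.values())

# Approximate transcript counts implied by each rounded percentage.
approx_counts = {
    label: round(coded_transcripts * pct / 100)
    for label, pct in question_type_pct.items()
}

print(f"uncoded: {uncoded_pct}%  sum of category shares: {total_pct}%")
for label, n in approx_counts.items():
    print(f"{label}: about {n} transcripts")
```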

On the customer service side of things, the librarians were excellent about greeting the patron (in the adjusted figures, the percentage was above 100% because many of the librarians greeted patrons who were unable to connect or were subsequently disconnected). They were somewhat behind in providing adequate closing language, such as thanking the user or inquiring if there were any more questions. Only 78% of the transcripts coded included this kind of language. Slightly behind this was the use of personal language, such as referring to the patron by name, with 65%. Only 31% of the transcripts included compensation for visual cues, such as "please wait while I check the catalog," or "hold on just a minute while I get that number for you." While the number may be skewed to the low side by certain directional and ready reference questions, which the librarian may answer quickly without needing such language to keep the patron informed of the search progress, it is nonetheless clear that some improvement must be made. In the digital world of chat, the patron cannot tell whether the librarian is working diligently on their question when the dialogue stops. Thus, we need to periodically let patrons know that we have not forgotten them and that we are working on a resolution to their inquiry. In our library, we have found that we are doing very well in greeting and, to a lesser extent, thanking the patron. Some improvement can be made in encouraging our librarians to use personal language when interacting with patrons, and extra training is required to remind our librarians how to keep the patron informed at all stages of the process.

As a side note, we discovered that the "pushing pages" feature of our chat software was vastly underutilized, with only 15% of the transcripts utilizing that feature. In addition, a lower-than-expected 27% of the transcripts made use of online resources beyond the library webpage and catalog. This is consistent, however, with directional/informational and known-item requests being the two largest categories of question, both of which may be answered through the information on the library webpage and in the catalog.

The trends that were discovered through the analysis of these transcripts highlight several areas of possible improvement. The high number of informational and known-item questions suggests that we may benefit from redesigning our webpage to make information such as the library's hours and circulation policies more visible. Also, a number of inquiries from patrons displayed a need for instruction in using the online catalog. Perhaps an interactive tutorial on the website and greater emphasis on instruction in catalog use in the reference interview and in classroom information literacy sessions would help to solve this problem. The low numbers in the other categories suggest that many people do not realize that the chat reference may be used for more in-depth questions. Certainly there comes a point at which the reference interview would be better conducted in person, but an advertising campaign that lets students know that they can get started on their research before they even come to the library may be beneficial to some, particularly those who tend to visit the library at the last minute.

Arnold, J., & Kaske, N. (2005). Evaluating the Quality of a Chat Service [Electronic version]. portal: Libraries and the Academy, 5(2), 177-193.

De Groote, S. L., Dorsch, J. L., Collard, S., & Scherrer, C. (2005). Quantifying Cooperation: Collaborative Digital Reference Service in the Large Academic Library [Electronic version]. College and Research Libraries, 66(5), 436-454.

Janes, J. (2003). Introduction to reference work in the digital age. New York: Neal-Schuman.

McClure, C. R., Lankes, R. D., Gross, M., & Choltco-Devlin, B. (2002). Statistics, measures, and quality standards for assessing digital reference library services: Guidelines and procedures. New York: Syracuse University.

Ronan, J., Reakes, P., & Cornwell, G. (2002/2003). Evaluating Online Real-Time Reference in an Academic Library: Obstacles and Recommendations [Electronic version]. Reference Librarian, (79/80), 225-240.

Ward, D. (2004). Measuring the Completeness of Reference Transactions in Online Chats: Results of an Unobtrusive Study [Electronic version]. Reference & User Services Quarterly, 44(1), 46-52.